ID |

Date |

Author |

Group |

Subject |

|

224

|

08 Dec 2024, 02:49 |

Jörg Hallmann | MID | Shift summary | successful measurements of elastomer, gelatine & bio samples

issue:

beam loss at about 2:45 due to communication issues with accelerator modules |

|

223

|

07 Dec 2024, 02:04 |

Jörg Hallmann | MID | Shift summary | data collection of bubbles in elastomers (good progress)

issue: beam loss in the tunnel during the day; experts checked the situation, which improved during the evening |

|

222

|

06 Dec 2024, 07:49 |

Johannes Moeller | MID | Stability Issue | Since the 4th, there have also been some instabilities in the vacuum levels at M1 and M2. |

| Attachment 1: Screenshot_from_2024-12-06_07-48-48.png

|

|

|

221

|

06 Dec 2024, 06:37 |

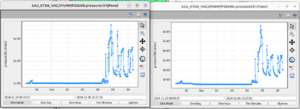

Johannes Moeller | MID | Stability Issue | The jumps have been repeating very frequently since yesterday afternoon. The overall drift of the beam seems to accelerate as well. |

| Attachment 1: Screenshot_from_2024-12-06_06-39-34.png

|

|

|

220

|

06 Dec 2024, 02:41 |

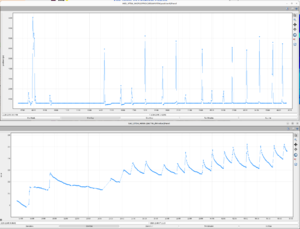

Jörg Hallmann | MID | Shift summary |

optimization of the timing of the involved components (laser, cameras, FEL)

generation of the first bubbles in water

issue

Several times, the beam lost its position in the tunnel and disappeared from the last tunnel imager. Based on the motor parameters of the mirrors, no mirror motion caused this. The position information from the XGM also did not show any unusual values.

|

| Attachment 1: 2024-12-06Bubble0003.png

|

|

|

219

|

05 Dec 2024, 19:47 |

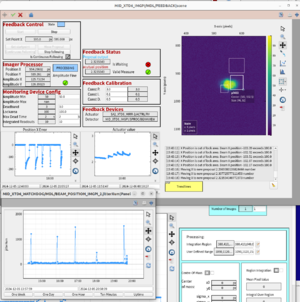

Alexey Zozulya | MID | Stability Issue | Today we observed multiple large disturbances to beam stability. They are characterised by large jumps in the horizontal beam position, which in the extreme case amount to a complete loss of beam on our side. The M2 mirror feedbacks are able to return the beam to the initial position after ca. 10 minutes. The beam jumps have been approximately 1 h apart. In these cases we do not observe the beam on the XTD6 tunnel imager, but we do observe good lasing quality on the XGM. |

| Attachment 1: 2024-12-05_19-43-31.png

|

|

|

218

|

05 Dec 2024, 15:58 |

Jan Gruenert | PRC | Issue | additional comment to MESSAGE ID: 217

PRC was informed by MID at 8h50 about a newly appeared limitation to about 60 pulses / train (MID had previously operated at 120 pulses / train).

It turned out that this limitation came from the protection of the SA2_XTD1_ATT attenuator, in particular the Si attenuator.

MID had probably not noticed this before because, after A12 was brought back online, the pulse energy for MID was somewhat higher and MID changed the attenuation settings.

MID could continue working with the full required 120 pulses / train by inserting more diamond attenuators in XTD1 instead of Silicon and using additional attenuators downstream. |

|

217

|

05 Dec 2024, 09:18 |

Johannes Moeller | MID | Issue | MID operation is limited to 55 bunches by SA2_XTD1_OPTICS_PROTECTION/MDL/ATT1 because of the inserted Si 0.5mm. This seems a bit too tight for 1mJ@17keV, since diamond is not so useful at this photon energy anymore. |

|

216

|

05 Dec 2024, 07:38 |

Jan Gruenert | PRC | Issue | Accelerator module issue

7h20: BKR/RC inform that there was an issue with the A12 klystron filament, which had caused a short downtime (6h15-6h22), and that A12 was taken off beam.

Beam was recovered in all beamlines, but the pulse energy came back lower, especially in SA1 (before 920 uJ, after about 500 uJ), and with higher fluctuations.

All experiments were contacted to find a convenient time for the required 15-30min without beam to recover A12, and all agreed to perform this task immediately.

The intervention actually lasted only a few minutes (7h38-7h42) and restored all intensities to the original levels (averages over 5min):

- SA1 (18 keV 1 bunch): MEAN=943.8 uJ, SD=56.08 uJ

- SA2 (17 keV 128 bunches): MEAN=1071 uJ, SD=148.3 uJ

- SA3 (1 keV 410 bunches): MEAN=347.5 uJ, SD=78.06 uJ
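To compare the shot-to-shot stability of the three beamlines, the standard deviations above can be expressed relative to the mean. The following is only a minimal sketch using the numbers quoted in this entry; the dictionary layout and the helper name `relative_sd_percent` are illustrative assumptions, not part of any facility tool.

```python
# (mean, standard deviation) in uJ, copied from the 5 min averages listed above.
readings = {
    "SA1": (943.8, 56.08),
    "SA2": (1071.0, 148.3),
    "SA3": (347.5, 78.06),
}


def relative_sd_percent(mean_uj, sd_uj):
    """Return the standard deviation as a percentage of the mean."""
    return 100.0 * sd_uj / mean_uj


# Relative fluctuation per beamline, in percent.
relative = {name: relative_sd_percent(m, s) for name, (m, s) in readings.items()}

for name, value in relative.items():
    print(f"{name}: {value:.1f} % relative fluctuation")
```

This gives roughly 6 % for SA1, 14 % for SA2 and 22 % for SA3.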

The issue seems resolved at 7h42. |

| Attachment 1: JG_2024-12-05_um_07.30.17.png

|

|

|

215

|

05 Dec 2024, 00:06 |

Jörg Hallmann | MID | Shift summary |

machine runs at 17 keV with about 1.2 mJ

achievements:

check of the Zyla & Shimadzu and trigger optimization for 1.1 MHz

installation of FEL shutter and optimization of position and opening time

optimization of instrument macros

issues

DAQ crashed when the Shimadzu was running at 2.2 MHz with 256 images. DOC worked on the problem in collaboration with DA and ITDM OCD. They will follow up once ITDM has checked the issue (redmine ticket #214244)

|

|

214

|

04 Dec 2024, 15:34 |

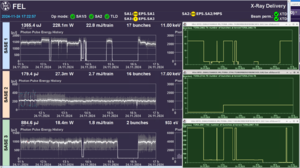

Jan Gruenert | PRC | Status | Beam delivery Status well after tuning finished (which went faster than anticipated). |

| Attachment 1: JG_2024-12-04_um_15.33.09.png

|

|

|

213

|

04 Dec 2024, 07:12 |

Tomas Popelar | SPB | Shift summary | X-ray delivery

19 keV, ~ 400 - 450 uJ, 1 - 128 pulses, stable delivery

Optical laser

N/A

Achievements/Observations

Lots of data collection, 659 runs total, lots of nice results, first observations of the 3D printing process

Issues

No major issue today |

|

212

|

01 Dec 2024, 23:07 |

Tokushi Sato | SPB | Shift summary | X-ray delivery

19 keV, ~450 uJ, 1 - 128 pulses, stable delivery

Optical laser

N/A

Achievements/Observations

Lots of data collection

Issues

No major issue today |

|

211

|

30 Nov 2024, 23:01 |

Tokushi Sato | SPB | Shift summary | X-ray delivery

19 keV, ~550 uJ, 1 - 128 pulses, stable delivery

Optical laser

N/A

Achievements/Observations

Sample tests with both setups

Siemens star resolution measurement

Issues

No major issue |

|

210

|

26 Nov 2024, 09:47 |

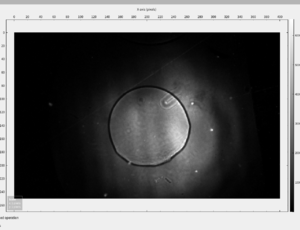

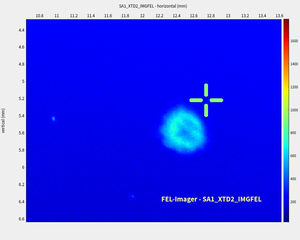

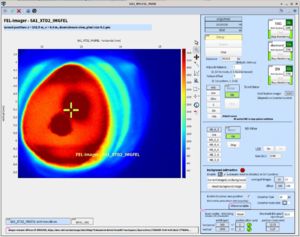

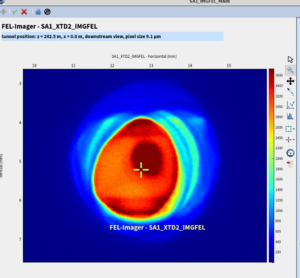

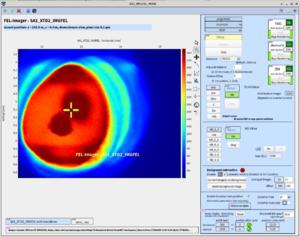

Andreas Koch | XPD | SA1 status | Concerning damage of the SA1 FEL imager, Operation-2024 eLog #206

The screen position of the YAG screen - when pressing the screen selection button - has been moved by 1 mm so that the damaged area is out of the field of view, see attached screenshots (damage visible under visible light exposure).

The screenshots show the cross-hair, i.e. its nominal beam position and the circular damaged area at its original position of the screen and the new position.

More information on the damage can be found in the damage report, entry #35:

https://docs.xfel.eu/share/page/site/xfelwp74/document-details?nodeRef=workspace://SpacesStore/fd862f30-c6a3-4a5e-9a65-817cbcebb2d9

Also, there is a comment in ttfinfo: https://ttfinfo.desy.de/XFELelog/show.jsp?dir=/2024/47&pos=2024-11-19T09:39:31 |

| Attachment 1: SA1_FEL_imager_-_damaged_spot_at_YAG_-_original_screen_position.png

|

|

| Attachment 2: SA1_FEL_imager_-_damaged_spot_at_YAG_-_new_screen_position.png

|

|

|

209

|

24 Nov 2024, 17:23 |

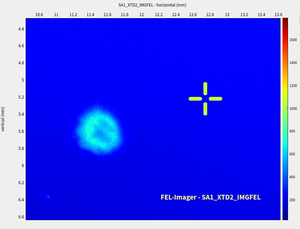

Jan Gruenert | PRC | Status | Status

All beamlines in operation.

The attachment ATT#1 shows the beam delivery of this afternoon, with the pulse energies on the left and the number of bunches on the right.

SA2 was not affected, SA1 was essentially without beam from 13h45 to 16h15 (2.5h) and SA3 didn't get enough (386) pulses in about the same period.

Beam delivery to all experiments is ok since ~16h15, and SCS is just not taking more pulses for other (internal) reasons. |

| Attachment 1: JG_2024-11-24_um_17.22.58.png

|

|

|

208

|

24 Nov 2024, 14:09 |

Jan Gruenert | PRC | Issue | Beckhoff communication loss SA1-EPS (again)

14h00 Same issue as before, but now EEE cannot clear the problem anymore remotely.

An access is required immediately to repair the SA1-EPS loop to regain communication.

EEE-OCD and VAC-OCD are coming in for the access and repair.

PRC update at 16h30 on this issue

One person each from EEE-OCD and VAC-OCD and DESY-MPC shift crew made a ZZ access to XTD2 to resolve the imminent Beckhoff EPS-loop issue.

The communication coupler for the fiber to EtherCat connection of the EPS-loop was replaced in SA1 rack 1.01.

Also, a new motor power terminal (replacing the one that had been smoking and was removed yesterday) was inserted to close the loop and regain redundancy.

All fuses were checked. However, the functionality of the motor controller in the MOV loop could not yet be repaired, so another ZZ access after the end of this beam delivery (e.g. next Tuesday) is required to make the SRA slits and FLT movable again. EEE-OCD will submit the request.

Moving FLT is not crucial now, and given the planned photon energies as shown in the operation plan, it can wait for repair until WMP.

Moving the SA1 SRA slits is also not strictly required at this moment (until Tuesday), but it will be needed for further beam delivery in the next weeks.

Conclusions:

In total, this afternoon SA1+SA3 were about 3 hours limited to max. 30 bunches.

The ZZ-access lasted less than one hour, and SA1/3 thus didn't have any beam from 15h18 to 16h12 (54 min).

Beam operation SA1 and SA3 is now fully restored, SCS and FXE experiments are ongoing.

The SA1-EPS-loop communication failure is cured and should thus not come back again.

At 16h30, PRC and SCS made a test that 384 bunches can be delivered to SA3 / SCS. OK.

Big thanks to all colleagues who were involved to resolve this issue: EEE-OCD, VAC-OCD, DOC, BKR, RC, PRC, XPD, DESY-MPC

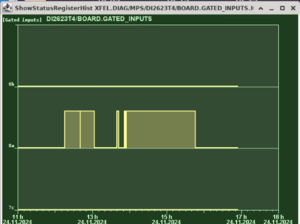

Timeline + statistics of safety_CRL_SA1 interlockings (see ATT#1)

(these are essentially the times during which SA1+SA3 together could not get more than 30 bunches)

12h16 interlock NOT OK

13h03 interlock OK

duration 47 min

13h39 interlock NOT OK

13h42 interlock OK

duration 3 min

13h51 interlock NOT OK

13h53 interlock OK

duration 2 min

13h42 interlock NOT OK

15h45 interlock OK

duration 2h 3min
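The durations in the timeline above can be cross-checked with a few lines of Python. This is a minimal sketch: the interval list is copied verbatim from the timeline, the helper name `minutes_between` is an illustrative assumption, and the total is a simple sum of the listed durations with no attempt to merge intervals that overlap.

```python
from datetime import datetime

# (interlock NOT OK, interlock OK) times as listed in the timeline, format "HHhMM".
intervals = [
    ("12h16", "13h03"),
    ("13h39", "13h42"),
    ("13h51", "13h53"),
    ("13h42", "15h45"),
]


def minutes_between(start, end):
    """Return the number of whole minutes between two same-day 'HHhMM' times."""
    fmt = "%Hh%M"
    delta = datetime.strptime(end, fmt) - datetime.strptime(start, fmt)
    return int(delta.total_seconds() // 60)


durations = [minutes_between(start, end) for start, end in intervals]
total = sum(durations)

for (start, end), minutes in zip(intervals, durations):
    print(f"{start} -> {end}: {minutes} min")
print(f"sum of listed durations: {total // 60} h {total % 60} min")
```

The per-interval values match the durations given in the timeline, and their sum is just under 3 hours.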

In total, this afternoon SA1+SA3 were about 3 hours limited to max. 30 bunches.

The ZZ-access lasted less than one hour, and thus SA1/3 together didn't have any beam at all from 15h18 to 16h12 (54 min).

SA3 had beam with 29 or 30 pulses until start of ZZ, while SA1 already lost beam for good at 13h48 until 16h12 (2h 24 min).

SA3/SCS needed 386 pulses which they had until 11h55, but this afternoon they only got / took 386 pulses/train from 13h04-13h34, 13h42-13h47 and after the ZZ. |

| Attachment 1: JG_2024-11-24_um_16.54.02.png

|

|

|

207

|

24 Nov 2024, 13:47 |

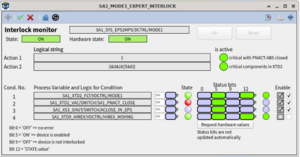

Jan Gruenert | PRC | Issue | Beckhoff communication loss SA1-EPS

12h19: BKR informs PRC about a limitation to 30 bunches in total for the north branch.

The property "safety_CRL_SA1" is limiting in MPS.

DOC and EEE-OCD and PRC work on the issue.

Just before this, DOC had been called and informed EEE-OCD about a problem with the SA1-EPS loop (many devices red), which was very quickly resolved.

However, "safety_CRL_SA1" had remained limiting, and SA3 could only do 29 bunches but needed 384 bunches.

EEE-OCD together with PRC followed the procedure outlined in the radiation protection document

Procedures: Troubleshooting SEPS interlocks

https://docs.xfel.eu/share/page/site/radiation-protection/document-details?nodeRef=workspace://SpacesStore/6d7374eb-f0e6-426d-a804-1cbc8a2cfddb

The device SA1_XTD2_CRL/DCTRL/ALL_LENSES_OUT was in OFF state although PRC and EEE confirmed that it should be ON, an aftermath of the EPS-loop communication loss.

EEE-OCD had to restart / reboot this device on the Beckhoff PLC level (no configuration changes); it then loaded the configuration values correctly and came back in the ON state.

This resolved the limitation in "safety_CRL_SA1".

All beamlines operating normally. Intervention ended around 13h30. |

|

206

|

23 Nov 2024, 15:11 |

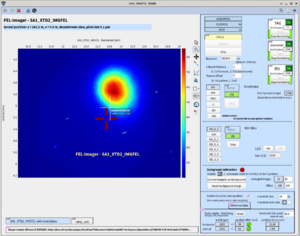

Jan Gruenert | PRC | Issue | Damage on YAG screen of SA1_XTD2_IMGFEL

A damage spot was detected during the restart of SA1 beam operation at 14h30.

To confirm that it is not something in the beam itself or a damage on another component (graphite filter, attenuators, ...), the scintillator is moved from YAG to another scintillator.

ATT#1: shows the damage spot on the YAG (lower left dark spot, the upper right dark spot is the actual beam). Several attenuators are inserted.

ATT#2: shows the YAG when it is just moved away a bit (while moving to another scintillator, but beam still on YAG): only the beam spot is now seen. Attenuators unchanged.

ATT#3: shows the situation with BN scintillator (and all attenuators removed).

The XPD expert will be informed about this newly detected damage so that they can take care of it. |

| Attachment 1: JG_2024-11-23_um_14.33.06.png

|

|

| Attachment 2: JG_2024-11-23_um_14.40.23.png

|

|

| Attachment 3: JG_2024-11-23_um_15.14.33.png

|

|

|

205

|

23 Nov 2024, 10:27 |

Jan Gruenert | PRC | Issue | SA1 Beckhoff issue

SA1 blocked to max. 2 bunches and SA3 limited to max 29 bunches

At 9h46, SA1 goes down to single bunch. PRC is called because SA3 (SCS) cannot get the required >300 bunches anymore. (at 9h36 SA3 was limited to 29 bunches/train)

FXE, SCS, DOC are informed, DOC and PRC identify that this is a SA1 Beckhoff problem.

Many MDLs in SA1 are red across the board, e.g. SRA, FLT, Mirror motors. Actual hardware state cannot be known and no movement possible.

Therefore, EEE-OCD is called in. They check and identify out-of-order PLC crates of the SASE1 EPS and MOV loops.

Vacuum and VAC PLC seem unaffected.

EEE-OCD needs access to tunnel XTD2. It could be a fast access if it is only a blown fuse in an EPS crate.

This will, however, require decoupling the North branch, i.e. stopping all beam operation for SA1 and SA3.

Anyhow, SCS confirms to PRC that 29 bunches are not enough for them and FXE also cannot go on with only 1 bunch, effectively it is a downtime for both instruments.

Additional oddity: there is still one pulse per train delivered to SA1 / FXE, but there is no pulse energy in it! XGM detects one bunch but with <10uJ.

It is unclear why; PRC, FXE, and BKR are looking into it until EEE goes into the tunnel.

In order not to ground the magnets in XTD2, a person from MPC / DESY has to accompany the EEE-OCD person.

This is organized and they will meet at XHE3 to enter XTD2 from there.

VAC-OCD is also aware and checking their systems and on standby to accompany to the tunnel if required.

For the moment it looks that the SA1 vacuum system and its Beckhoff controls are ok and not affected.

11h22: SA3 beam delivery is stopped.

In preparation of the access the North branch is decoupled. SA2 is not affected, normal operation.

11h45 : Details on SA1 control status. Following (Beckhoff-related) devices are in ERROR:

- SA1_XTD2_VAC/SWITCH/SA1_PNACT_CLOSE and SA1_XTD2_VAC/SWITCH/SA1_PNACT_OPEN (this limits bunch numbers in SA1 and SA3 by EPS interlock)

- SA1_XTD2_MIRR-1/MOTOR/HMTX and HMTY and HMRY, but not HMRX and HMRZ

- SA1_XTD2_MIRR-2/MOTOR/* (all of them)

- SA1_XTD2_FLT/MOTOR/

- SA1_XTD2_IMGTR/SWITCH/*

- SA1_XTD2_PSLIT/TSENS/* but not SA1_XTD2_PSLIT/MOTOR/*

- more ...
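The list above mixes exact device names with `*` wildcards. A minimal sketch of how such a list could be used to test whether a given device falls under the reported errors is shown below, using only Python's standard `fnmatch` module. The pattern list is copied from this entry (the FLT line and the "more ..." item are left out because they are incomplete here); the function name `is_reported_in_error` and the MIRR-2 example name are hypothetical.

```python
from fnmatch import fnmatchcase

# Patterns copied from the 11h45 list above (incomplete entries omitted).
error_patterns = [
    "SA1_XTD2_VAC/SWITCH/SA1_PNACT_CLOSE",
    "SA1_XTD2_VAC/SWITCH/SA1_PNACT_OPEN",
    "SA1_XTD2_MIRR-1/MOTOR/HMTX",
    "SA1_XTD2_MIRR-1/MOTOR/HMTY",
    "SA1_XTD2_MIRR-1/MOTOR/HMRY",
    "SA1_XTD2_MIRR-2/MOTOR/*",
    "SA1_XTD2_IMGTR/SWITCH/*",
    "SA1_XTD2_PSLIT/TSENS/*",
]


def is_reported_in_error(device_id):
    """Return True if device_id matches any pattern from the logbook list."""
    return any(fnmatchcase(device_id, pattern) for pattern in error_patterns)


# HMRX on MIRR-1 is explicitly listed as not affected in the entry.
print(is_reported_in_error("SA1_XTD2_MIRR-1/MOTOR/HMRX"))
# Hypothetical MIRR-2 motor name, covered by the wildcard pattern.
print(is_reported_in_error("SA1_XTD2_MIRR-2/MOTOR/HMTX"))
```

Case-sensitive matching (`fnmatchcase`) is used so that the result does not depend on the operating system.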

12h03 Actual physical access to XTD2 has begun (team entering).

12h25: the EPS loop errors are resolved, FLT, SRA, IMGTR, PNACT all ok. Motors still in error.

12h27: the MOTORs are now also ok.

The root causes and failures will be described in detail by the EEE experts, here only in brief:

Two PLC crates lost communication to the PLC system. Fuses were ok. These crates had to be power cycled locally.

Now the communication is re-established, and the devices on EPS as well as MOV loop have recovered and are out of ERROR.

12h35: PRC and DOC checked the previously affected SA1 devices and all looks good, team is leaving the tunnel.

13h00: Another / still a problem: FLT motor issues (related to EPS). This component is now blocking EPS. EEE and device experts working on it in the control system. They find that they again need to go to the tunnel.

13h40 EEE-OCD is at the SA1 XTD2 rack 1.01 and it smells burnt. Checking fuses and power supplies of the Beckhoff crates.

14h: The SRA motors and FLT are both depending on this Beckhoff control.

EEE decides to remove the defective Beckhoff motor terminal because voltage is still delivered and there is the danger that it will start burning.

We proceed with the help of the colleagues in the tunnel and the device expert to manually move the FLT manipulator to the graphite position,

and looking at the operation plan photon energies it can stay there until the WMP.

At the same time we manually check the SRA slits, the motors are running ok. However, removing that Beckhoff terminal also disables the SRA slits.

It would require a spare controller from the labs, thus we decide in the interest of going back to operation to move on without being able to move the SRA slits.

14h22 Beam is back on. Immediately we test that SA3 can go to 100 bunches - OK. At 14h28 they go to 384 bunches. OK. Handover to SCS team.

14h30 Realignment of SA1 beam. When inserting IMGFEL to check whether the SRA slits are clipping the beam, it is found after some tests with the beamline attenuators and the other scintillators that there is a damage spot on the YAG! See next logbook entry and ATT#3. The damage is not on the graphite filter, on the attenuators or in the beam, as we initially suspected.

The OCD colleagues from DESY-MPC and EEE are released.

The SRA slits are not clipping now. The beam is aligned to the handover position by RC/BKR. Beam handover to FXE team around 15h. |

| Attachment 1: JG_2024-11-23_um_12.06.35.png

|

|

| Attachment 2: JG_2024-11-23_um_12.10.23.png

|

|

| Attachment 3: JG_2024-11-23_um_14.33.06.png

|

|

|